
Click fraud is costing advertisers billions in losses.

Read our in-depth study by independent research firm Juniper Research.

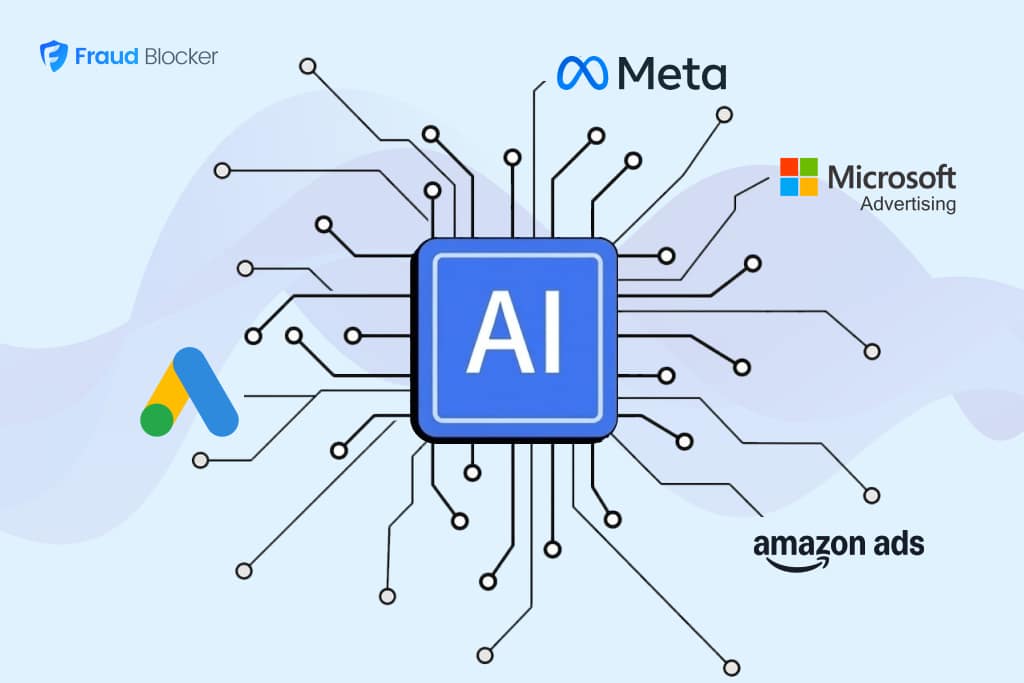

Ad fraud isn’t a new problem for advertisers. Click farms, bot traffic, and fake publisher sites have been trying to drain budgets for years. But AI is changing the speed, scale, and sophistication with which these schemes now operate.

Over the past year, we’ve seen a wave of AI-powered fraud that cuts across every part of the digital advertising ecosystem, from AI-generated made-for-advertising (MFA) sites flooding programmatic bidstreams to mobile apps that silently hijacked 25 million devices.

The examples in this article show how AI is making nearly every category of ad fraud faster to deploy, cheaper to scale, and harder to detect.

We’ll also see how this threatens advertisers at every level, not just big programmatic buyers.

AI ad fraud is the use of artificial intelligence or LLM systems to automate, scale, and conceal fraudulent activity in digital advertising. It builds on the same mechanics as traditional click fraud, but AI drastically improves it across three major facets: sophistication, scalability, and adaptability.

Fraud schemes are now more complex, easier to execute at scale, and much harder to catch.

Let’s see how.

Modern AI-powered fraud is built to look indistinguishable from real user behavior. Bots no longer just rapidly click; they scroll pages, vary their dwell time, move their mouse, and navigate between links the way a human would.

AI systems have also made it easier to bypass CAPTCHAs, spoof device IDs, and even simulate actions that used to be clear signs of human traffic.

The result is that ad fraud doesn’t look like ad fraud to basic detection systems until real damage is done.

The infrastructure cost of running a fraud operation used to be a limiting factor. Fraudsters would need to build hundreds of convincing fake publisher sites, create thousands of app profiles, and/or maintain a sophisticated bot network.

But not anymore.

Bad actors can now create convincing MFA sites, generate fake app listings, and produce synthetic content on a massive scale. That means more fraudsters can target a larger number of campaigns and potentially siphon even more than the 22% of ad budgets lost to fraud annually.

A particularly dangerous consequence is that AI fraud systems don’t stand still. They are constantly learning.

As detection tools identify and block fraudulent patterns, AI-driven schemes adapt their behavior to evade those same filters. They can adjust click timing, rotate identities, and change traffic signatures to stay ahead.

This creates a continuous loop where simple rule-based defenses are trying (and often failing) to catch up.

(Image: Deepsee.io data on AI-generated slop sites.)

According to Deepsee.io data shared with Digiday, AI-generated ‘slop’ sites surged 717% in a single year, growing from around 17,000 flagged domains in June 2024 to over 108,000 by May 2025. Of those, roughly 97,000 were classified specifically as having AI-driven content.

These were MFA sites created at scale using AI. They mimic real publishers, pass surface-level quality checks, and collect ad revenue from advertisers who have no idea their ads aren’t being seen by real people.

The Genisys scheme, dismantled by Google and IAS in early 2026, is another example of this. In the scheme, almost 500 AI-generated sites were built, each one receiving traffic through hijacked user devices.

The Genisys scheme didn’t just build MFA websites. It also used 115 Android apps to funnel traffic to them. The apps were ordinary utility tools like flashlight apps and PDF converters published on the Google Play Store. Once installed, hidden in-app browsers silently sent traffic to those MFA sites in the background, generating millions of fake bid requests across 25 million compromised devices.

To avoid detection, the apps spoofed their bundle IDs to appear as legitimate traffic from apps like Netflix and Instagram.

DoubleVerify has also reported a 3x increase in fraudulent iOS apps and a 6x increase in fraudulent Android apps in 2025 versus the five-year average, suggesting that AI is likely powering more of these types of schemes.

Read more: The Biggest Ad Fraud Scams in History. See how schemes like Genisys compare to the largest ad fraud operations ever uncovered, and what they cost advertisers.

AI has also enabled a new generation of bots that scroll pages, vary their dwell time, and simulate mouse movements in a way that closely copies human behavior. A 2025 report from mFilterIt found that 45–55% of app installs showed anomalies such as abnormally fast click-to-install times. Yet the clicks behind them didn’t look like bot clicks; on the surface, they were consistent with how regular users install apps.
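Click-to-install time (CTIT) is one of the few signals a behavior-mimicking bot can’t easily disguise, because it depends on timestamps rather than on how the click looked. Below is a minimal sketch of flagging implausibly fast installs, assuming you can export each install’s attributed click timestamp and install timestamp. The `Install` record and the 10-second cutoff are hypothetical placeholders, not figures from the mFilterIt report; calibrate any threshold against your own install data.

```python
from dataclasses import dataclass

# Hypothetical cutoff: installs completed faster than this after the ad click
# are treated as suspicious. Calibrate against your own data.
MIN_PLAUSIBLE_CTIT_SECONDS = 10.0


@dataclass
class Install:
    install_id: str
    click_ts: float    # Unix timestamp of the attributed ad click
    install_ts: float  # Unix timestamp of the install (e.g. first app open)


def click_to_install_seconds(install: Install) -> float:
    """Return the click-to-install time (CTIT) in seconds."""
    return install.install_ts - install.click_ts


def flag_fast_installs(installs: list[Install],
                       min_seconds: float = MIN_PLAUSIBLE_CTIT_SECONDS) -> list[str]:
    """Return IDs of installs whose CTIT is implausibly short or negative."""
    flagged = []
    for inst in installs:
        ctit = click_to_install_seconds(inst)
        # A negative CTIT means the click was logged after the install,
        # which a genuine click-driven install cannot produce.
        if ctit < min_seconds:
            flagged.append(inst.install_id)
    return flagged


installs = [Install("a", 0, 4), Install("b", 0, 95), Install("c", 100, 90)]
print(flag_fast_installs(installs))  # ['a', 'c']
```

A fixed cutoff only catches the crudest cases; comparing each traffic source’s CTIT distribution against your overall distribution is a natural next step.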

The same 2025 mFilterIt report found that 35% of affiliate traffic showed signs of bot involvement and misattributed organic actions. In practice, this means bots are completing form fills, app installs, and purchase events that get logged as real conversions in attribution systems.

The CRM records them, the campaign gets credit, and the budget shifts toward channels that appear to be performing. But the performance isn’t real.

The compounding effect is worse than the initial waste: every fake conversion logged as real pushes more budget toward channels that only appear to be performing.

Verification standards in newer ad environments like Connected TV (CTV) and digital audio are still catching up, and we’re seeing ad fraud take hold in these channels.

In 2024, DoubleVerify uncovered CycloneBot, an ad fraud scheme that spoofed extended viewing sessions closely mirroring how real users watch TV. The estimated impact was up to $7.5 million per month for unprotected advertisers, dwarfing some of the biggest ad fraud schemes.

We’ve also seen similar automation applied to digital audio through schemes like BeatSting, which generated fake listening events to siphon advertiser spend.

If you run Google Performance Max or Meta Advantage+ campaigns, you’re already exposed to AI ad fraud, whether you know it or not.

These campaign types automatically place your ads across Google’s and Meta’s full inventory networks. That means your budget flows to placements you never selected, including the kinds of AI-generated MFA sites and fraudulent apps described above.

You won’t see a flag in your dashboard. In fact, the metrics from these placements may look great: high impressions, reasonable CTR, steady click volume (remember, AI-generated sites are built specifically to game these engagement signals).

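The real tell is your downstream performance: whether those clicks and reported conversions actually turn into qualified leads, sales, and retained customers.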
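One way to check downstream performance is to join placement-level click data with conversions you have confirmed yourself, for example in your CRM. Below is a minimal sketch, assuming you can produce per-placement totals for clicks, platform-reported conversions, and validated conversions. The `PlacementStats` record and every threshold are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class PlacementStats:
    placement: str
    clicks: int
    reported_conversions: int   # conversions the ad platform attributes to the placement
    validated_conversions: int  # conversions you confirmed downstream, e.g. in your CRM


def suspicious_placements(stats: list[PlacementStats], min_clicks: int = 100,
                          rate_fraction: float = 0.25,
                          min_validation_rate: float = 0.5) -> list[str]:
    """Flag placements whose clicks rarely turn into real, validated outcomes.

    With enough clicks, a placement is flagged when either
      * its validated conversions per click fall below `rate_fraction` of the
        account-wide average, or
      * fewer than `min_validation_rate` of its platform-reported conversions
        are confirmed downstream.
    All thresholds are hypothetical defaults.
    """
    total_clicks = sum(s.clicks for s in stats)
    total_validated = sum(s.validated_conversions for s in stats)
    if total_clicks == 0:
        return []
    baseline_rate = total_validated / total_clicks

    flagged = []
    for s in stats:
        if s.clicks < min_clicks:
            continue  # too little data to judge this placement
        low_yield = s.validated_conversions / s.clicks < rate_fraction * baseline_rate
        unconfirmed = (s.reported_conversions > 0 and
                       s.validated_conversions / s.reported_conversions < min_validation_rate)
        if low_yield or unconfirmed:
            flagged.append(s.placement)
    return flagged


report = [
    PlacementStats("news-site.example", 1000, 20, 18),
    PlacementStats("mfa-site.example", 2000, 40, 2),
    PlacementStats("tiny.example", 10, 0, 0),
]
print(suspicious_placements(report))  # ['mfa-site.example']
```

The second condition targets the fake-conversion problem described earlier: a placement can look healthy in the ad platform while almost none of its conversions survive a downstream check.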

As LLMs continue to develop, we’re still discovering the full extent of how fraudsters are using them. But the approach to detection is largely the same regardless of architecture, industry, or channel: understand what normal traffic looks like, then spot the signals that don’t fit the pattern.
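“Understand what normal looks like, then spot what doesn’t fit” can be illustrated with a trailing-window z-score on daily click counts. This is a minimal sketch of a generic statistical baseline, not how any particular detection product works; the 14-day window and the threshold of 3 standard deviations are illustrative assumptions.

```python
import statistics


def anomalous_days(daily_clicks: list[int], window: int = 14,
                   z_threshold: float = 3.0) -> list[int]:
    """Return the indices of days whose clicks deviate sharply from a trailing baseline.

    For each day, "normal" is the mean and population standard deviation of
    the previous `window` days. Both parameters are illustrative defaults.
    """
    flagged = []
    for i in range(window, len(daily_clicks)):
        history = daily_clicks[i - window:i]
        mean = statistics.mean(history)
        # Floor the spread at 1 click so a perfectly flat history
        # doesn't cause a division by zero.
        spread = max(statistics.pstdev(history), 1.0)
        z_score = (daily_clicks[i] - mean) / spread
        if abs(z_score) > z_threshold:
            flagged.append(i)
    return flagged


clicks = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 100, 102, 98, 400]
print(anomalous_days(clicks))  # [14]
```

This only catches volume anomalies. Bots built to blend into your baseline, as described above, can stay under any fixed threshold, which is why volume checks work best alongside downstream and behavioral signals.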

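Here are the tell-tale signs to watch for: spikes in clicks or impressions that don’t translate into conversions, unusually fast click-to-install times, sudden traffic from geographies you don’t target, and traffic above your frequency cap.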

There’s no single fix for AI ad fraud. The schemes are too varied, and they’re evolving too fast for any one defense to provide comprehensive protection. But you can use a layered approach and combine the right tools with tighter operational habits to reduce your exposure.

Platform-level filters catch some invalid traffic, but they’re not designed to stop ad fraud this sophisticated. Dedicated click fraud protection tools, on the other hand, are built for exactly this purpose. They analyze every click in real time, evaluating behavioral signals, device fingerprints, IP reputation, and dozens of other signals to catch what Google’s and Meta’s built-in systems miss.
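To make “evaluating many signals per click” concrete, here is a deliberately simplified risk score that combines a few yes/no signals with fixed weights. The signal names, weights, and block threshold are all hypothetical. A static score like this is also exactly the kind of rule-based defense that adaptive, AI-driven fraud can learn to evade; dedicated tools typically draw on far more signals than this.

```python
from dataclasses import dataclass

# Hypothetical signals and weights, for illustration only.
WEIGHTS = {
    "ip_on_blocklist": 0.4,
    "datacenter_ip": 0.25,
    "fingerprint_seen_on_many_ips": 0.2,
    "no_post_click_interaction": 0.15,
}


@dataclass
class ClickSignals:
    ip_on_blocklist: bool               # IP reputation: known bad source
    datacenter_ip: bool                 # IP reputation: hosting/cloud range
    fingerprint_seen_on_many_ips: bool  # same device fingerprint, many IPs
    no_post_click_interaction: bool     # behavioral: no scroll/dwell after landing


def risk_score(signals: ClickSignals) -> float:
    """Sum the weights of every signal that fired (0.0 = none, 1.0 = all)."""
    return sum(w for name, w in WEIGHTS.items() if getattr(signals, name))


def should_block(signals: ClickSignals, threshold: float = 0.5) -> bool:
    """Block the click's source when its combined risk reaches the threshold."""
    return risk_score(signals) >= threshold


suspect = ClickSignals(True, True, False, False)
print(round(risk_score(suspect), 2), should_block(suspect))  # 0.65 True
```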

The more intermediaries there are between you and your ad placement, the more opportunities bad actors have to add fraudulent placements. Prioritize direct buys and private marketplace deals where possible, and regularly audit your placement reports for low-quality or suspicious domains.

Review your Google Ads placement reports regularly and exclude domains with high impressions but low engagement. We previously published a list of MFA websites to avoid. But as schemes like Genisys show, bad actors can spin up dozens of new websites very easily, so a static list can’t keep up. Treat any site that shows MFA characteristics as one to exclude, even if it looks legitimate at first glance.
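The “high impressions, low engagement” rule can be applied to an exported placement report with a few lines of code. This minimal sketch assumes a CSV with columns named `placement`, `impressions`, and `engagements`; those names are hypothetical, so map them to whatever your export actually contains. Because MFA sites are built to game CTR, “engagement” here is best taken from a signal you trust downstream, such as engaged sessions in your analytics. The thresholds are illustrative, not recommendations.

```python
import csv
import io


def placements_to_exclude(report_csv: str, min_impressions: int = 1000,
                          max_engagement_rate: float = 0.001) -> list[str]:
    """Return placements with many impressions but very little engagement.

    Expects hypothetical CSV columns: placement, impressions, engagements.
    """
    flagged = []
    for row in csv.DictReader(io.StringIO(report_csv)):
        impressions = int(row["impressions"])
        engagements = int(row["engagements"])
        if impressions < min_impressions:
            continue  # not enough volume to judge
        if engagements / impressions <= max_engagement_rate:
            flagged.append(row["placement"])
    return flagged


report = (
    "placement,impressions,engagements\n"
    "news.example,5000,150\n"
    "slop.example,20000,4\n"
    "small.example,200,0\n"
)
print(placements_to_exclude(report))  # ['slop.example']
```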

Read more: What Is Click Fraud? A Complete Guide: Understand the full landscape of click fraud, from bot traffic to competitors clicking, and how it impacts your ad spend.

Automated campaigns rarely flag fraud on their own, so monitor metrics like CTR versus conversion rate, geographic distribution, timing patterns, and affiliate quality yourself. Catching anomalies early can protect both your current budget and your future campaigns.
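The CTR-versus-conversion-rate check can be automated with a simple comparison against recent history. This minimal sketch assumes you can export daily impressions, clicks, and conversions; it pools previous days into a baseline and flags the pattern described earlier in this article, where engagement climbs while real outcomes fall. The `DailyMetrics` record and the 1.5x and 0.5x multipliers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class DailyMetrics:
    impressions: int
    clicks: int
    conversions: int


def ctr(m: DailyMetrics) -> float:
    """Click-through rate: clicks per impression."""
    return m.clicks / m.impressions if m.impressions else 0.0


def cvr(m: DailyMetrics) -> float:
    """Conversion rate: conversions per click."""
    return m.conversions / m.clicks if m.clicks else 0.0


def ctr_cvr_divergence(history: list[DailyMetrics], today: DailyMetrics,
                       ctr_rise: float = 1.5, cvr_drop: float = 0.5) -> bool:
    """True if CTR jumped well above baseline while conversion rate fell well below it."""
    baseline = DailyMetrics(
        impressions=sum(m.impressions for m in history),
        clicks=sum(m.clicks for m in history),
        conversions=sum(m.conversions for m in history),
    )
    base_ctr, base_cvr = ctr(baseline), cvr(baseline)
    if base_ctr == 0 or base_cvr == 0:
        return False  # no usable baseline yet
    return ctr(today) >= ctr_rise * base_ctr and cvr(today) <= cvr_drop * base_cvr


last_week = [DailyMetrics(10_000, 200, 10)] * 7
print(ctr_cvr_divergence(last_week, DailyMetrics(10_000, 400, 4)))  # True
```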

AI ad fraud isn’t a future problem. It’s happening now, and it’s targeting the same Google Ads and Meta Ads campaigns you’re running today.

The schemes we’ve covered in this article (from AI-generated MFA sites hijacking PMax placements, to bots sophisticated enough to fake conversions) represent a very different challenge from the click fraud advertisers dealt with even two years ago.

Platform-level filters weren’t built to catch this. That’s where Fraud Blocker comes in.

Our platform monitors every click on your ads in real time, analyzing dozens of signals, including device fingerprints and engagement signals, to distinguish genuine users from fraudulent traffic. When fraud is detected, the offending source is automatically added to your exclusion lists, stopping it from hitting your budget again.
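The general idea of automatic exclusion can be illustrated with a small sketch. This is not Fraud Blocker’s implementation. The `ExclusionList` class, the rule of excluding a source after two fraud flags, and the `max_size` cap (a stand-in for whatever list limit your ad platform imposes) are all hypothetical.

```python
from collections import Counter


class ExclusionList:
    """Exclude a traffic source once it has been flagged enough times."""

    def __init__(self, flags_to_exclude: int = 2, max_size: int = 500):
        self.flags_to_exclude = flags_to_exclude
        self.max_size = max_size          # hypothetical platform list limit
        self.flag_counts = Counter()      # fraud flags seen per source
        self.excluded: list[str] = []     # excluded sources, oldest first

    def report_fraud(self, source: str) -> bool:
        """Record a fraud flag; return True if the source is now excluded."""
        if source in self.excluded:
            return True
        self.flag_counts[source] += 1
        if self.flag_counts[source] >= self.flags_to_exclude:
            if len(self.excluded) >= self.max_size:
                self.excluded.pop(0)  # evict the oldest entry to make room
            self.excluded.append(source)
            return True
        return False


ex = ExclusionList(flags_to_exclude=2, max_size=2)
for ip in ["203.0.113.1", "203.0.113.1", "203.0.113.2", "203.0.113.2"]:
    ex.report_fraud(ip)
print(ex.excluded)  # ['203.0.113.1', '203.0.113.2']
```

Requiring more than one flag trades a little speed for fewer false positives. Evicting the oldest entry is one simple policy for a capped list; an evicted source that keeps misbehaving is re-excluded on its next flag.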

Ready to see how much invalid traffic is costing your campaigns? Start your 7-day free trial today.

AI ad fraud is the use of artificial intelligence or LLMs to automate, scale, and conceal fraudulent activity in digital advertising. Fraudsters use AI to create convincing (but fake) websites, generate bot behavior that closely mimics real users, and adapt their tactics, often faster than traditional rule-based defenses can respond.

Watch for spikes in clicks or impressions that don’t translate into conversions, unusually fast click-to-install times, sudden traffic from geographies you don’t target, or traffic above your frequency cap. AI makes these signals harder to spot because bots are designed to mimic normal user patterns.
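Two of these signs, off-target geography and traffic above your frequency cap, are easy to check mechanically. This minimal sketch assumes you have impression-level records with a stable user identifier and a resolved country code, and that the list covers a single frequency-cap period (for example, one day). The `Impression` record is hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Impression:
    user_id: str  # assumed stable identifier, e.g. a hashed cookie or device ID
    country: str  # ISO 3166-1 alpha-2 code resolved from the request


def off_target_impressions(impressions: list[Impression],
                           targeted_countries: set[str]) -> list[Impression]:
    """Impressions served in countries the campaign does not target."""
    return [imp for imp in impressions if imp.country not in targeted_countries]


def users_over_frequency_cap(impressions: list[Impression], cap: int) -> dict[str, int]:
    """Users who received more impressions than the cap allows in this period."""
    per_user = Counter(imp.user_id for imp in impressions)
    return {user: count for user, count in per_user.items() if count > cap}


log = [Impression("u1", "US"), Impression("u1", "US"), Impression("u1", "US"),
       Impression("u2", "VN"), Impression("u3", "CA")]
print(off_target_impressions(log, {"US", "CA"}))  # [Impression(user_id='u2', country='VN')]
print(users_over_frequency_cap(log, cap=2))      # {'u1': 3}
```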

Google’s built-in protection isn’t enough on its own. Google has basic ad filters and refunds advertisers for some invalid clicks, but its filters are tuned to protect its own platform, not to block sophisticated fraud. That’s why dedicated solutions like Fraud Blocker have caught fake traffic in campaigns where Google Ads missed it entirely.

Traditional click fraud is relatively simple: competitors clicking your ads, or basic bots generating empty traffic. AI-powered click fraud, on the other hand, can mimic real user behavior down to mouse movements and scroll patterns, making it significantly harder to detect and more damaging if left unchecked.

ABOUT THE AUTHOR

Matthew is the resident content marketing expert at Fraud Blocker with several years of experience writing about ad fraud. When he’s not producing killer content, you can find him working out or walking his dogs.
